|

Xuan (Lily) Yang

I am Xuan (Lily) Yang (杨萱), a PhD student in Computer Science at Duke University, advised by Prof. Jian Pei. I received my bachelor's and master's degrees from Zhejiang University (ZJU), where I collaborated with Prof. Yang Yang. I have interned at TikTok, working on multi-agent systems, and visited Stanford and NUS as a research intern.

My research focuses on:

- Data-centric AI: data valuation, selection, and synthesis for trustworthy and efficient models.

- LLM agents / multi-agent systems (MAS): efficient inference and multi-agent architecture optimization.

📌 Open to collaboration on data-centric AI / MAS projects · part-time internships.

Feel free to reach out — always happy to connect!

Email / Google Scholar / LinkedIn

|

|

|

May 2026

|

Excited to join Google as a Summer Intern.

|

|

Apr 2026

|

Batch-of-Thought accepted to ACL 2026 (Oral).

|

|

Apr 2026

|

Wrapped up my internship at TikTok — had a wonderful time!

|

|

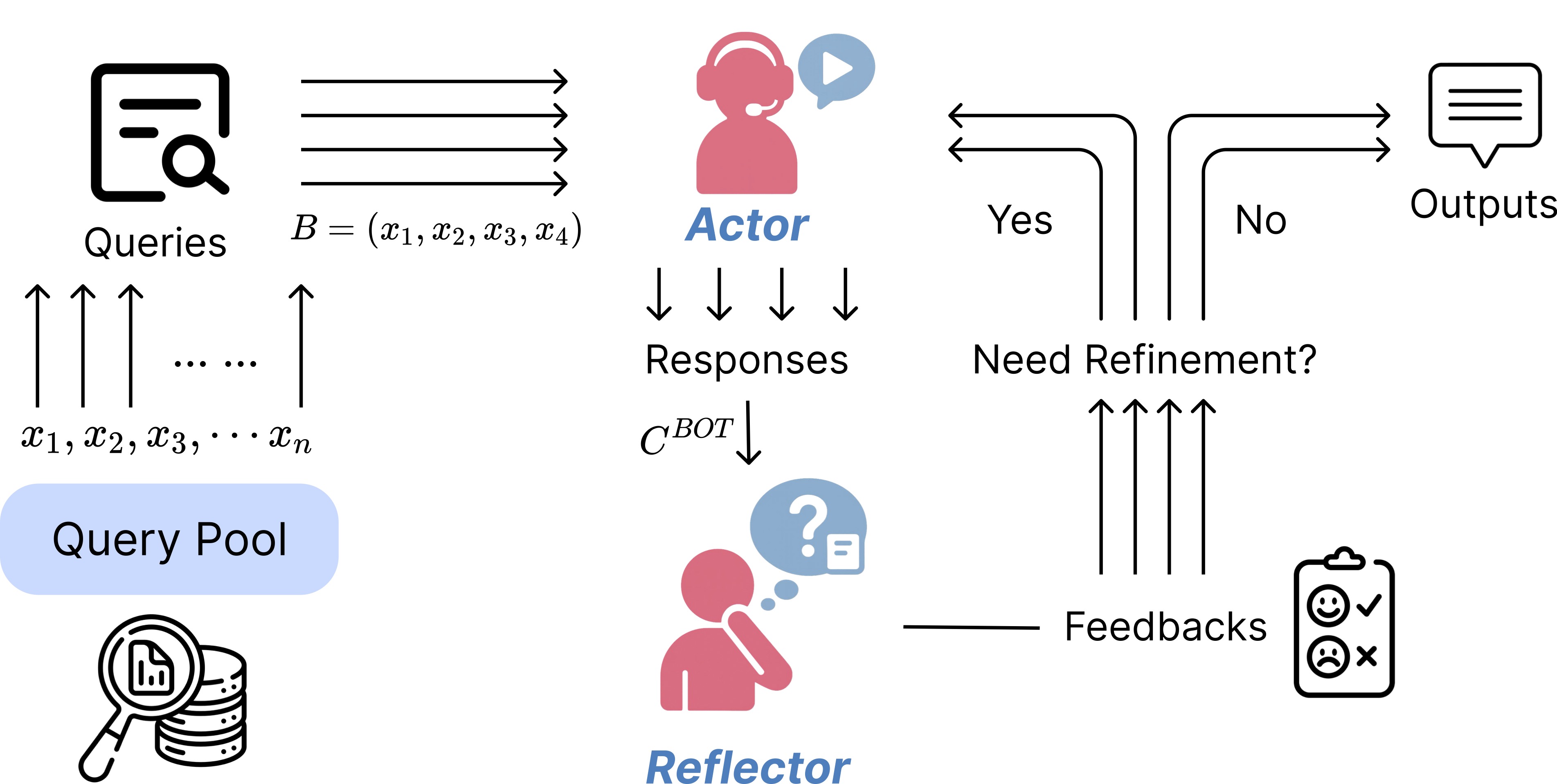

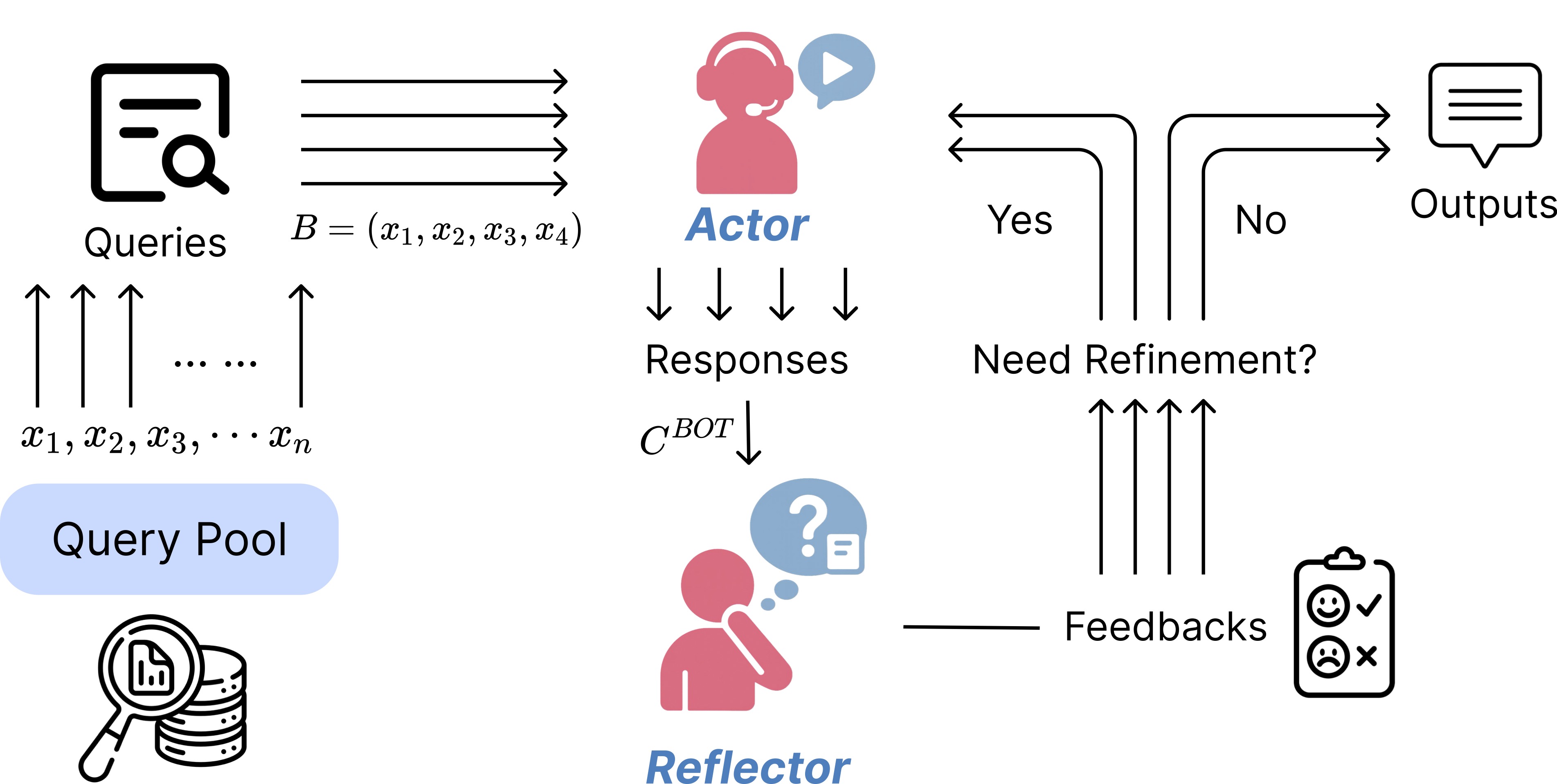

Batch-of-Thought: Cross-Instance Learning for Enhanced LLM Reasoning

[Code]

Xuan Yang, Furong Jia, Roy Xie, Xiong Xi, Hengwei Bian, Jian Li, Monica Agrawal

ACL, 2026 (Oral)

We introduce Batch-of-Thought (BoT), a training-free method that processes related queries jointly to identify shared reasoning templates and catch errors through consistency checks, and BoT-Reflection (BoT-R), a multi-agent architecture where a Reflector performs joint evaluation to unlock mutual information unavailable in isolated inference. Experiments across three model families and six benchmarks demonstrate that BoT-R consistently improves accuracy and confidence calibration while reducing inference costs by up to 61%.

|

|

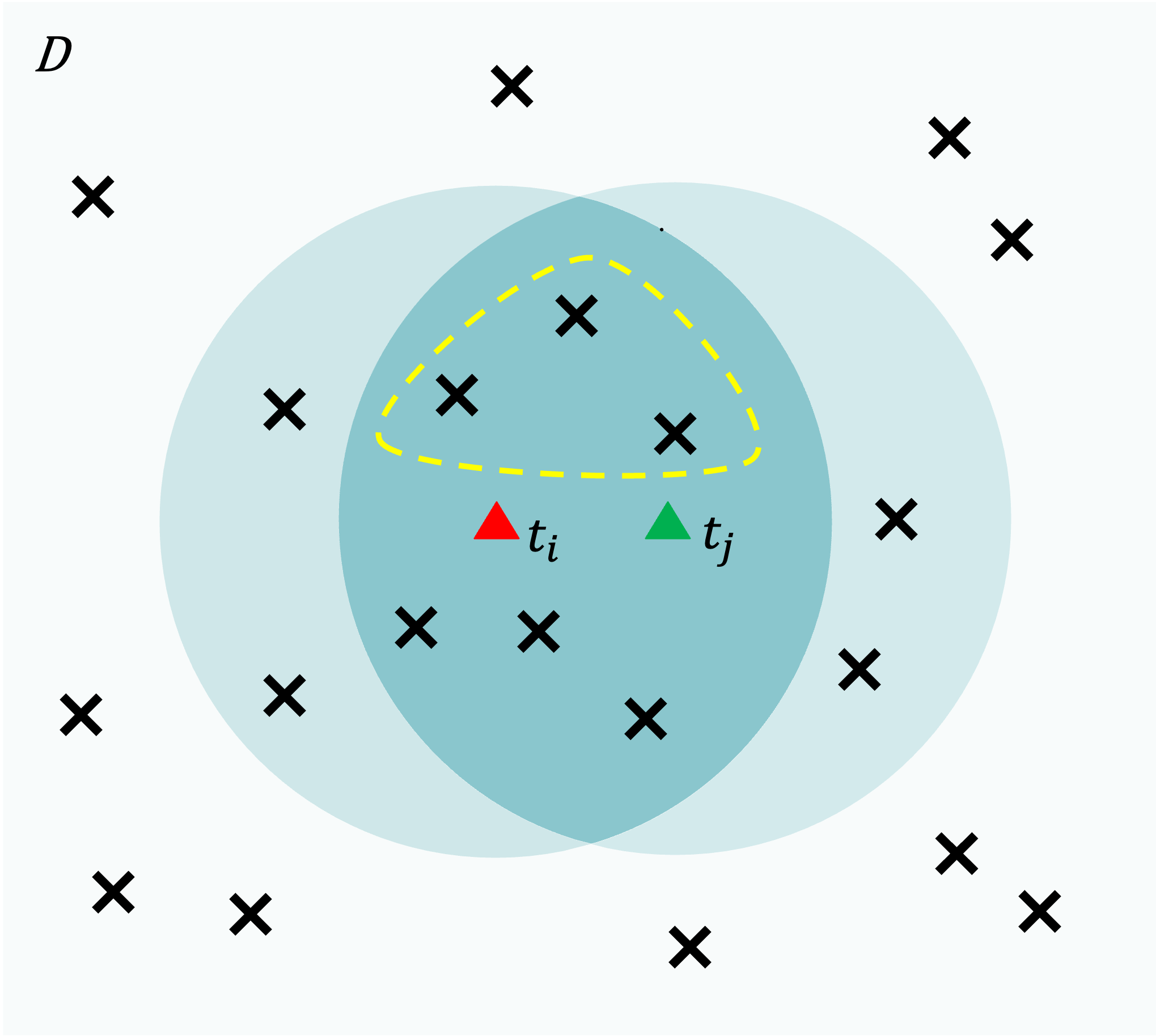

Local Shapley: Efficient Data Valuation for Model Training

[Code]

Xuan Yang, Hsi-Wen Chen, Ming-Syan Chen, Jian Pei

We propose Local Shapley, which formalizes model-induced locality through support sets to focus Shapley computation on influential training points rather than exhaustive coalition enumeration. We design LSMR and prove that it achieves the optimal number of model training runs by training each support exactly once; LSMR-A extends this with an unbiased Monte Carlo estimator for larger supports. Experiments across multiple model families demonstrate substantial retraining reductions and speedups while preserving high valuation fidelity.

|

|

Unfolding and Modeling the Recovery Process after COVID Lockdowns

[News]

Xuan Yang, Yang Yang, Chenhao Tan, Yinghe Lin, Zhengzhe Fu, Fei Wu, Yueting Zhuang

Nature Scientific Reports, 2022

(Front-Page Feature, Zhejiang Daily)

We present a graph-learning-based computational framework leveraging electricity consumption data to analyze post-lockdown recovery dynamics. Our approach quantifies sector-specific impacts, evaluates the effectiveness of government recovery policies, and models inter-sector dependencies to inform more effective strategies for holistic economic revitalization.

|

|

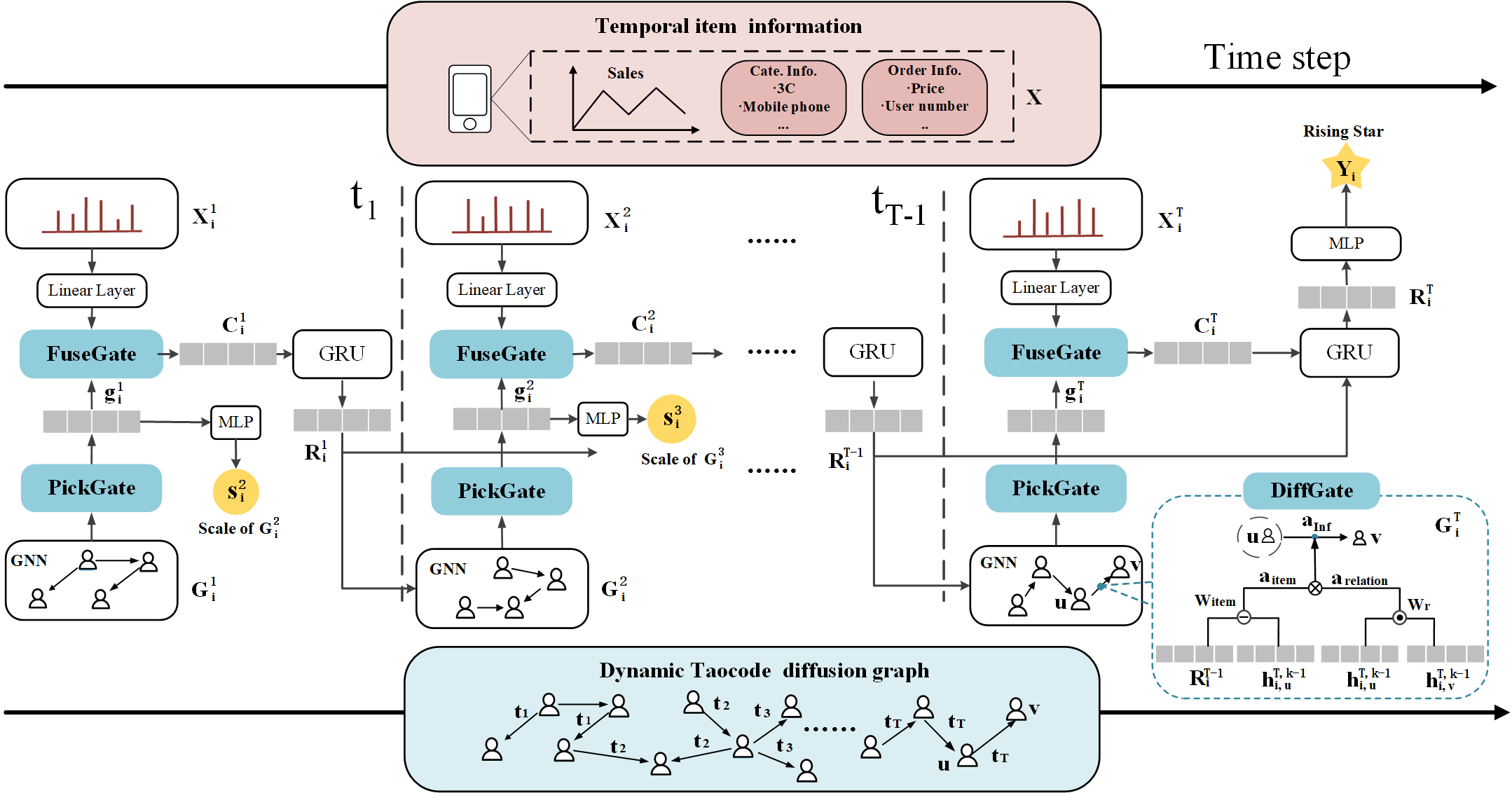

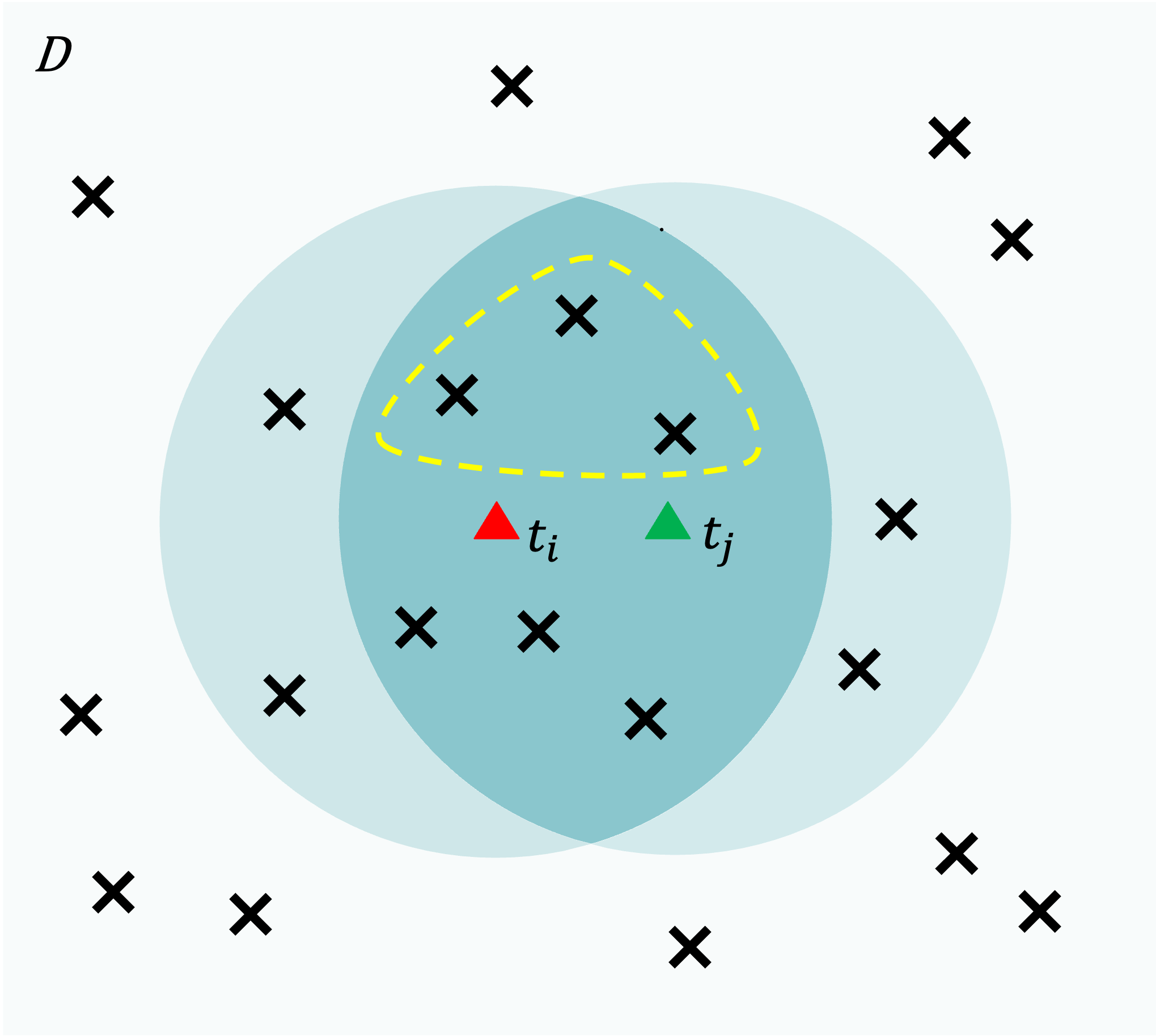

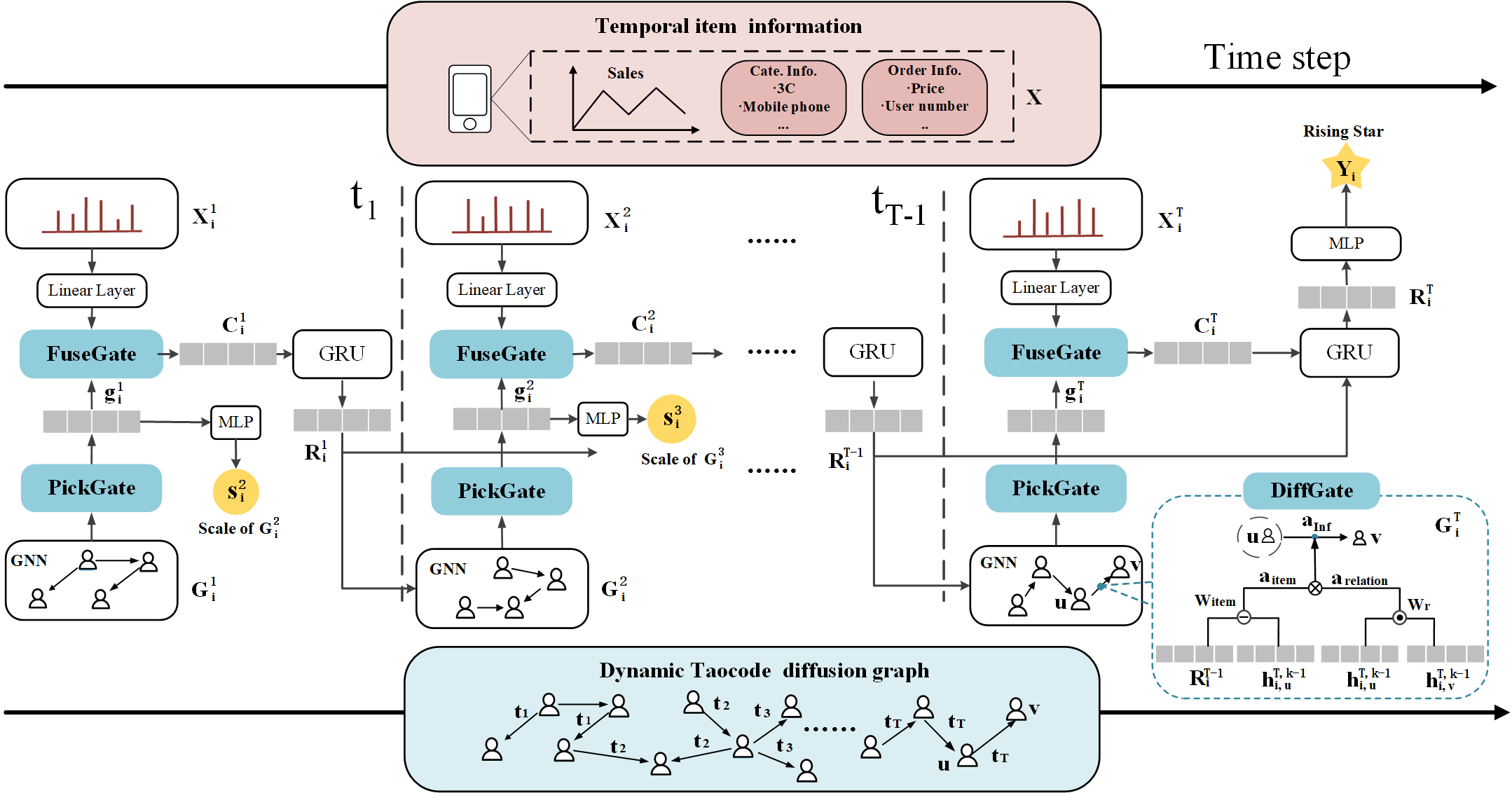

Who's Next: Rising Star Prediction via Diffusion of User Interest in Social Networks

Xuan Yang, Yang Yang, Jintao Su, Yifei Sun, Shen Fan, Zhongyao Wang

IEEE Transactions on Knowledge and Data Engineering, 2022

We propose RiseNet, a novel recommendation framework designed to identify potential “Rising Star” items and mitigate unfairness in recommendation systems. RiseNet models the dynamic diffusion of user interests alongside temporal item features, using a coupled mechanism to capture their interactions and a multi-task GNN-based framework to quantify user interest. Experiments on real-world Taobao data demonstrate its effectiveness in predicting emerging popular items.

|

|

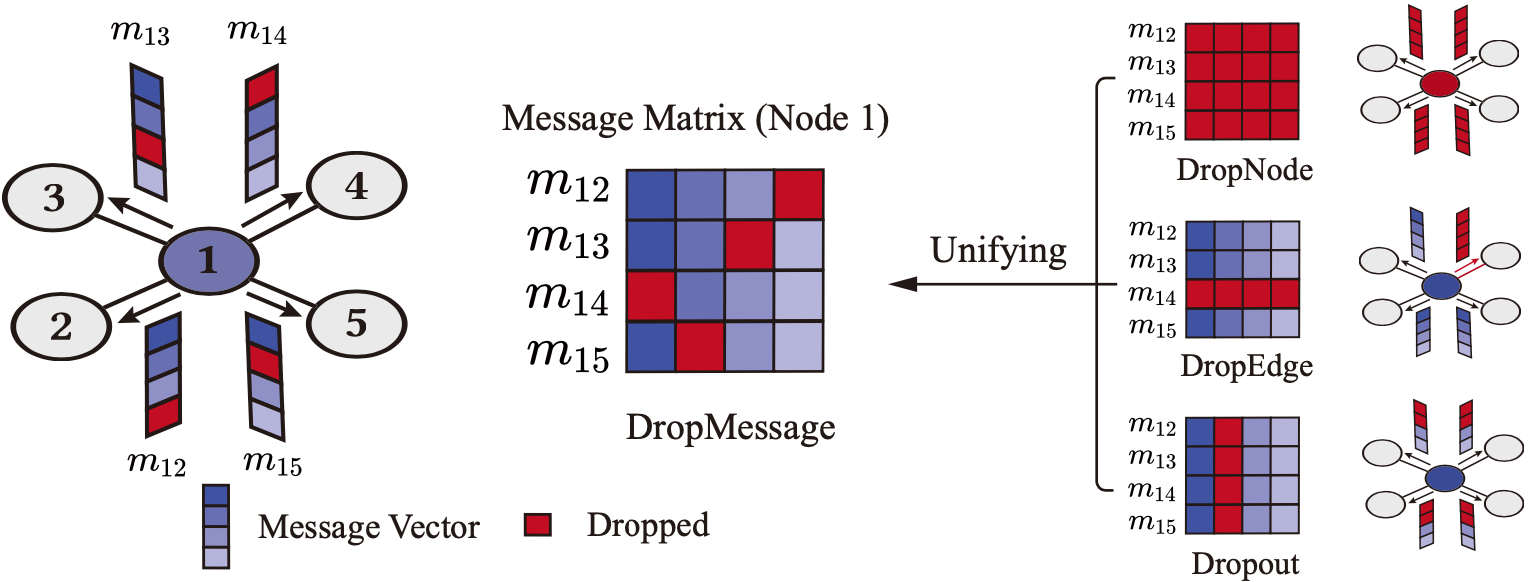

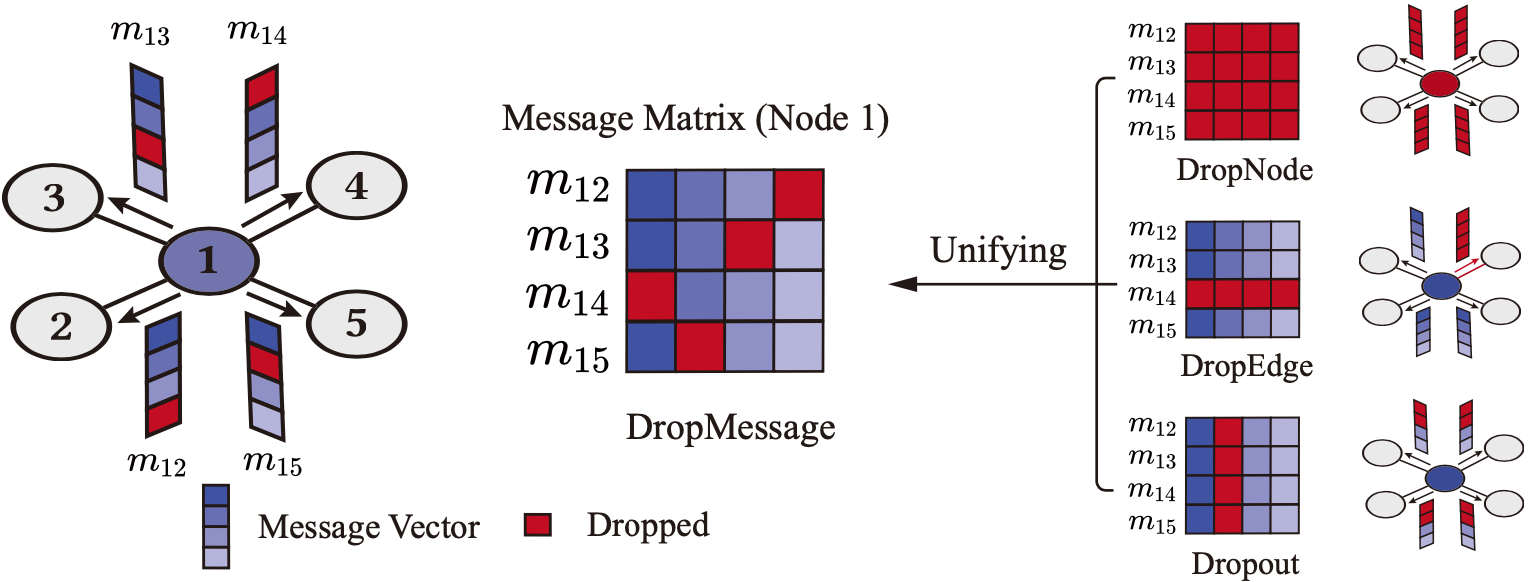

DropMessage: Unifying Random Dropping for Graph Neural Networks

Taoran Fang, Zhiqing Xiao, Chunping Wang, Jiarong Xu, Xuan Yang, Yang Yang

AAAI, 2023 (Distinguished Paper Award)

We present a unified framework that generalizes existing random dropping techniques by applying dropping operations to the message matrix in Graph Neural Networks (GNNs). Building on this, we propose DropMessage, a versatile method applicable to any message-passing GNN. Theoretically, DropMessage improves training stability by reducing sample variance and enhances information diversity from an information-theoretic perspective.

|

|

TikTok, Bellevue, WA

Research Intern, Risk Control team

May 2025 -- Apr 2026

|

|

Alibaba Group, Hangzhou, China

Research Intern, Data Assets and Algorithm team

Oct 2020 -- Dec 2021

|

|

Stanford University, Palo Alto, CA

Research Assistant, Center for Magnetic Nanotechnology

Jan 2019 -- Mar 2019

|

|

National University of Singapore, Singapore

Research Assistant, Big Brain, BIGHEART

Jun 2018 -- Aug 2018

|

|